In my previous post, I explored the technical rabbit hole of running llama.cpp in Termux on an old Android phone. While that was a rewarding experiment, let’s be honest: it wasn’t exactly “plug-and-play” for most people.

But it’s 2026, and the game has changed. You no longer need to be a Linux wizard to have a private, powerful AI in your pocket. In this guide, I cover four ways to run LLMs locally on your Android or iOS device: completely free, with unlimited requests, and zero data leaving your phone.

1. Google AI Edge Gallery: The “One-Click” Starter

https://github.com/google-ai-edge/gallery

Google’s official showcase is the perfect starting point. It’s the easiest way to see what your phone is actually capable of. It’s optimized for mobile NPUs, meaning it’s fast and efficient for tasks like tone refinement, audio transcription, and image understanding.

The latest version now supports Gemma 4 and Gemma 3, models specifically “distilled” to run on mobile hardware without eating up your RAM.

The “Skills” Update: One of the coolest additions is support for SKILLS. You can now extend what the AI can do by pulling from a vast library at skills.sh. My personal favorite is the Humanizer skill—it’s great for making AI-generated text sound more, well, human.

Demo

https://youtube.com/shorts/1H6z2-K7gvw

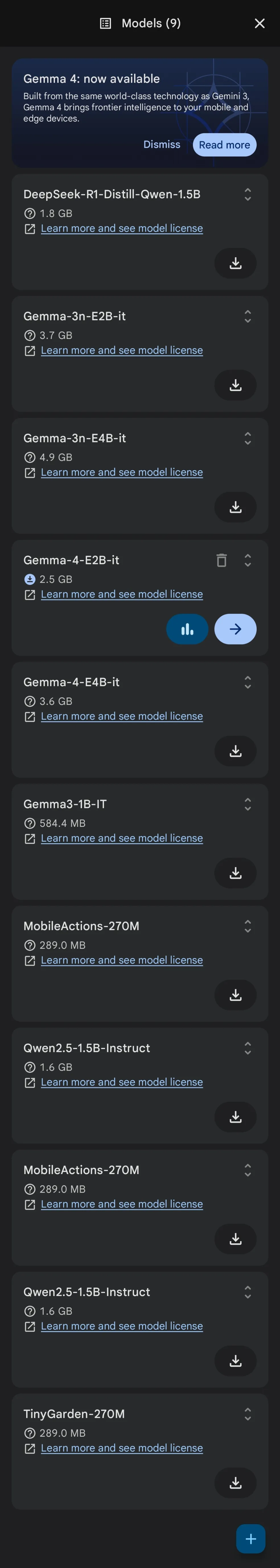

Models Available

- Gemma 4 E2B and E4B

- Gemma 3 1B

- Gemma 3n E2B and E4B

- DeepSeek R1 Distill Qwen 1.5B

- Qwen 2.5 1.5B

- LiteRT distilled models

Bundling your own model

If you’re feeling adventurous, you can even bundle your own models by following the LiteRT community guide. It walks you through converting models from PyTorch or other formats.

2. PocketPal AI

PocketPal AI is the one I keep coming back to. It’s built on llama.cpp (the de facto standard for running GGUF models on-device), but the interface is actually designed for a human using a phone, not a developer staring at a terminal.

The ‘Pals’ feature is the standout here. Instead of one messy chat thread, you can set up different “Pals” with their own system prompts. I have one for coding and another for creative writing. It also handles the latest architectures like Qwen 3.5 and LFM 2.5, which are incredibly fast on mobile.

Why it works for me:

- Easy Model Setup: You can download GGUF models directly within the app. For a smooth experience, I recommend any GGUF model (try Unsloth’s uploads) with Q4_K_M quantization.

- 100% Private: Once the model is on your phone, you can go into Airplane Mode and the AI won’t even notice.

- Live Stats: It shows you tokens per second in real-time. It’s satisfying to see your phone’s hardware in action.
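If you’re curious what a tokens-per-second readout boils down to, here is a minimal sketch. It is not PocketPal’s code; `fake_stream` is a hypothetical stand-in for a model’s streaming output, and the function simply counts tokens and divides by wall-clock time.

```python
import time
from typing import Iterable, Iterator, Tuple


def tokens_per_second(tokens: Iterable[str]) -> Tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in tokens:
        count += 1
    elapsed = time.perf_counter() - start
    # Guard against a zero-length measurement on an empty or instant stream.
    rate = count / elapsed if elapsed > 0 else 0.0
    return count, rate


def fake_stream(n: int, delay: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields n tokens, pausing between each."""
    for i in range(n):
        time.sleep(delay)
        yield f"tok{i}"
```

Calling `tokens_per_second(fake_stream(20))` returns the token count and the measured rate. A real app would wrap the model’s own token stream instead of the fake one.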

3. AnythingLLM: The Researcher’s Workspace

If you need your AI to actually do something with your files, AnythingLLM is a different beast. It’s less of a chatbot and more of a portable workspace.

The killer feature is On-Device RAG (retrieval-augmented generation). You can feed it a PDF or text file sitting on your phone, and the AI will answer questions based only on that document. I’ve used this to summarize 50-page technical docs during flights with zero internet.
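To make the RAG idea concrete, here is a toy sketch of the retrieve-then-prompt loop. It is not AnythingLLM’s implementation: real RAG apps typically use learned embeddings and a vector index, while this version swaps in plain word-count cosine similarity so it runs with only the standard library. All function names here are my own.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(text: str, size: int = 40) -> List[str]:
    """Split a document into chunks of roughly `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return the k chunks most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context_chunks: List[str]) -> str:
    """Assemble a prompt that tells the model to answer from the context only."""
    context = "\n---\n".join(context_chunks)
    return (
        "Answer using only the context below. If the answer is not there, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

The prompt from `build_prompt` is what gets handed to the local model. Because only the top-ranked chunks are included, a 50-page document never has to fit into the model’s context window all at once.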

What sets it apart:

- Chat with your docs: Local indexing means your data never touches a server.

- Tools & Agents: It supports web scraping, calendar editing, and the Model Context Protocol (MCP), so it’s slowly becoming a true mobile agent.

- The “Infinite Power” Trick: If your phone is struggling, you can connect it to a massive 70B model running on your home PC via API and use your phone as a remote window into that power.
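For a feel of what “via API” means in that last trick, here is a rough sketch of a chat request to a home PC, assuming the PC runs Ollama and exposes its HTTP API on the default port 11434. The LAN address and model tag in the usage note are placeholders I made up, and this is not how AnythingLLM is wired internally. In the app you would normally just enter the endpoint in its settings.

```python
import json
import urllib.request


def build_chat_request(host: str, model: str, prompt: str, port: int = 11434) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON reply instead of a token stream
    }
    return urllib.request.Request(
        f"http://{host}:{port}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(host: str, model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(host, model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["message"]["content"]
```

A call would look like `ask("192.168.1.50", "llama3.3:70b", "Summarize this doc")`, with both the address and the model tag swapped for your own. Note that Ollama listens only on localhost by default, so the PC usually needs `OLLAMA_HOST=0.0.0.0` set before other devices on the network can reach it.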

4. Termux + Ollama: The Power User Shortcut

This is for my Android users who miss the command line. We’re using Termux to run Ollama directly on the device. It’s the closest you’ll get to a desktop experience on a mobile screen.

The Quick Setup:

- Install Termux (use the version from F-Droid, not the Play Store).

- Run `pkg install ollama`.

- Type `ollama run qwen3.5:0.8b`.

For the full setup guide, check out my other post: How to Install and Run Ollama in Termux on Android (2026 Guide).

That’s it. Ollama will auto-download the model and drop you into a CLI chat. You can run pretty much anything from the Ollama Library as long as your RAM can handle it. It’s the fastest way to test new models the second they drop.

Summary: Which one should you pick?

| Method | Best For | RAM & Hardware Specs | Supported Models & Classes | Ease of Use | Customization |
|---|---|---|---|---|---|
| Google AI Edge Gallery | Beginners & NPU Acceleration | 2GB - 4GB RAM (optimized for devices with Tensor/Snapdragon NPUs, e.g., Pixel 8/9, Galaxy S24) | Gemma 4 E2B/E4B, Gemma 3 1B, Gemma 3n E2B/E4B, LiteRT distilled models | ⭐⭐⭐⭐⭐ | ⭐⭐ |
| PocketPal AI | Daily Chats & Custom Personas | 4GB - 8GB RAM (e.g., OnePlus 10/11, Xiaomi 13; Q4_K_M/Q8_0 GGUFs) | Qwen 3.5 (1.5B/3B), LFM 2.5 (1.2B), Llama 3.2 (1B/3B) | ⭐⭐⭐⭐ | ⭐⭐⭐ |
| AnythingLLM | Document RAG & Local Workspaces | 6GB - 12GB RAM (e.g., Galaxy S26 Ultra, iPhone 16 Pro Max) | Custom Local GGUF, Ollama endpoints, Cloud APIs | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Termux + Ollama | Developers & Command Line Users | 4GB - 8GB RAM (e.g., modern ARM64 devices running Android 10+) | Qwen 3.5 0.8B, LFM 2.5 1.2B, Llama 3 8B (Q2_K) | ⭐ | ⭐⭐⭐⭐ |

💬 Frequently Asked Questions (FAQ)

What are the best local LLM apps for Android and iOS in 2026?

The most user-friendly local LLM apps for mobile are Google AI Edge Gallery (optimized for mobile NPUs) and PocketPal AI (built on llama.cpp, with separate customizable ‘Pals’). For document-focused RAG, AnythingLLM is the leading choice.

Can I run local AI models on a mobile device without internet?

Yes. Once you download the respective app and GGUF/LiteRT models onto your device, you can completely disconnect from cellular or Wi-Fi (Airplane Mode) and run continuous local inference with complete privacy and zero data leakage.

What are the hardware requirements to run local LLMs on a smartphone?

To run local models smoothly, your phone should have a modern 64-bit ARM CPU and at least 6GB of RAM, ideally 8GB. Lightweight quantized models (e.g., 0.8B to 1.5B parameters) require roughly 1.5GB of free memory, while larger 3B models need 3GB+ of free RAM.
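As a back-of-the-envelope check on those numbers, here is a small estimator. It rests on two assumptions of mine: Q4_K_M averages roughly 4.85 bits per weight (a commonly quoted approximation), and the KV cache plus runtime add a flat 0.5GB, which is a guess that grows with context length in practice. Treat the output as a floor, not a guarantee.

```python
def estimate_ram_gb(params_billions: float,
                    bits_per_weight: float = 4.85,  # assumed average for Q4_K_M
                    overhead_gb: float = 0.5) -> float:  # assumed KV cache + runtime
    """Rough RAM estimate: quantized weight size plus a flat overhead."""
    # params (in billions) * bits per weight / 8 bits per byte gives gigabytes.
    weights_gb = params_billions * bits_per_weight / 8
    return weights_gb + overhead_gb


def fits_in_ram(params_billions: float, free_ram_gb: float) -> bool:
    """True if the estimated footprint fits in the free RAM given."""
    return estimate_ram_gb(params_billions) <= free_ram_gb
```

With these assumptions, a 1.5B model comes out near 1.4GB and a 3B model near 2.3GB, so the 1.5GB and 3GB+ figures above leave some headroom for the OS and longer chats.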

The era of “Local-First” AI

The era of relying on expensive subscriptions and cloud-tracking for AI is ending. Whether you want a simple one-click app or a full terminal setup, you can now carry a “God-tier” brain in your pocket for $0.

Read more about local AI and self-hosting on nkaushik.in.

Happy (Local) Chatting!